AI Agent Orchestration Platform

Define agents in YAML. Discover peers via capability cards. Compose teams. Stream responses. Ship a single binary. AgentGo brings compiled-language performance and typed multi-agent collaboration to agentic AI.

Private repository — contact us for access

Why AgentGo?

Purpose-built to solve the real pain points of running AI agents in production

The Problem

Heavy Python Runtimes

Multi-GB Docker images, slow cold starts, GIL-limited concurrency. Every agent deployment becomes an infrastructure burden.

Poor Concurrency Models

Thread pools and async/await don't scale. Running 100+ concurrent agents means fighting your runtime, not building features.

No Built-in Observability

Bolting on monitoring after the fact. Custom metrics, tracing, and audit logging are afterthoughts in most frameworks.

Configuration Sprawl

Agent definitions scattered across code, environment variables, and deployment configs. No single source of truth.

The AgentGo Solution

Compiled Go Binary

~30 MB statically linked binary. Sub-second cold starts. No interpreter, no virtual environment, no dependency hell.

Goroutine Worker Pool

Native goroutine-based scheduler handles thousands of concurrent agents. Zero contention, built-in backpressure, graceful shutdown.

130+ Prometheus Metrics

Instrumented from day one. Pre-built Grafana dashboards, JSONL audit logs, structured logging, and cost tracking out of the box.

Single YAML Per Agent

One file defines the agent: model, tools, prompts, permissions, guardrails. Hot-reload on change. Pack system for distribution.

Platform Features

Everything you need to build, deploy, and operate AI agents at scale

Multi-LLM Providers

Gemini, OpenAI, Anthropic, and the LiteLLM proxy. Switch providers per agent via config. Streaming and function calling work across all backends.

Flexible Agents

YAML-defined agents with prompt templates, tool bindings, and schema validation. Pack system for reusable agent bundles. Subagent delegation.

Workflow Engine

Sequential, parallel, and conditional execution. Dynamic supervisor pattern with event-driven orchestration. Visual diagram generation.

Durable Agents

Persistent, event-driven agent instances with SQLite checkpoints. Crash recovery, pause/resume, mailbox messaging, and approval workflows.

Chat & Streaming

Multi-turn chat sessions with SSE token streaming, an idle watchdog, and parallel non-blocking tool dispatch. Interactive CLI REPL plus a React + TypeScript Web Console (chat, workflows, durable agents, schedules, Mermaid diagrams).

Tool Ecosystem

Native Go tools, YAML-configured tools, shell scripts, MCP server integration, and connector-backed integrations (T1/T2/T3 authoring tiers). Skill-provided tools with schema validation and timeouts.

Scheduling & Triggers

Cron scheduling with SQLite persistence. Webhook triggers with HMAC validation. Timer-based and poll-based event sources for durable agents.
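HMAC validation of webhook triggers typically recomputes an HMAC-SHA256 over the raw request body and compares it in constant time. The sketch below assumes a `sha256=<hex>` signature format (the convention GitHub webhooks use); the header name and encoding AgentGo expects are not stated here, and `verifySignature` and `sign` are hypothetical helpers.

```go
package main

import (
	"crypto/hmac"
	"crypto/sha256"
	"encoding/hex"
	"fmt"
	"strings"
)

// verifySignature (hypothetical) checks an assumed "sha256=<hex>" header
// against an HMAC-SHA256 of the raw body.
func verifySignature(secret, body []byte, header string) bool {
	sigHex, ok := strings.CutPrefix(header, "sha256=")
	if !ok {
		return false
	}
	got, err := hex.DecodeString(sigHex)
	if err != nil {
		return false
	}
	mac := hmac.New(sha256.New, secret)
	mac.Write(body)
	// hmac.Equal compares in constant time to avoid timing side channels.
	return hmac.Equal(got, mac.Sum(nil))
}

// sign produces a header value in the same assumed format (useful for tests).
func sign(secret, body []byte) string {
	mac := hmac.New(sha256.New, secret)
	mac.Write(body)
	return "sha256=" + hex.EncodeToString(mac.Sum(nil))
}

func main() {
	secret := []byte("webhook-secret")
	body := []byte(`{"event":"push"}`)
	header := sign(secret, body)
	fmt.Println(verifySignature(secret, body, header))                    // true
	fmt.Println(verifySignature(secret, []byte(`{"event":"x"}`), header)) // false
}
```

The signature must be computed over the exact bytes received, before any JSON decoding, or legitimate requests will fail verification.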

Observability

130+ Prometheus metrics with pre-built Grafana dashboards. Structured JSON logging via Loki, JSONL audit trails per tool call, and a W3C-conformant cross-agent span tree (/v1/traces/{id}) with cost roll-up to the root caller.
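Cross-agent span trees rely on propagating the W3C `traceparent` header (`version-traceid-parentid-flags`). A minimal sketch of parsing it and deriving a child context for an outgoing agent call; `TraceParent`, `Parse`, and `Child` are illustrative names, and only version `00` is handled.

```go
package main

import (
	"crypto/rand"
	"encoding/hex"
	"errors"
	"fmt"
	"strings"
)

// TraceParent holds the fields of a W3C traceparent header (hypothetical type).
type TraceParent struct {
	TraceID  string // 32 lowercase hex chars, shared by every span in the tree
	ParentID string // 16 lowercase hex chars, identifies the calling span
	Flags    string // 2 hex chars; "01" means sampled
}

// isLowerHex reports whether s is exactly n lowercase hex characters.
func isLowerHex(s string, n int) bool {
	if len(s) != n {
		return false
	}
	for _, c := range s {
		if !((c >= '0' && c <= '9') || (c >= 'a' && c <= 'f')) {
			return false
		}
	}
	return true
}

// Parse accepts only version "00" and rejects all-zero trace or parent IDs.
func Parse(h string) (TraceParent, error) {
	parts := strings.Split(h, "-")
	if len(parts) != 4 || parts[0] != "00" {
		return TraceParent{}, errors.New("unsupported traceparent version or layout")
	}
	tp := TraceParent{TraceID: parts[1], ParentID: parts[2], Flags: parts[3]}
	if !isLowerHex(tp.TraceID, 32) || !isLowerHex(tp.ParentID, 16) || !isLowerHex(tp.Flags, 2) ||
		tp.TraceID == strings.Repeat("0", 32) || tp.ParentID == strings.Repeat("0", 16) {
		return TraceParent{}, errors.New("malformed traceparent")
	}
	return tp, nil
}

// Child keeps the trace ID and mints a new random span ID for an outgoing
// call, so the callee's spans attach to the same trace tree.
func (tp TraceParent) Child() TraceParent {
	var b [8]byte
	if _, err := rand.Read(b[:]); err != nil {
		panic(err)
	}
	return TraceParent{TraceID: tp.TraceID, ParentID: hex.EncodeToString(b[:]), Flags: tp.Flags}
}

// String renders the header value to send downstream.
func (tp TraceParent) String() string {
	return "00-" + tp.TraceID + "-" + tp.ParentID + "-" + tp.Flags
}

func main() {
	// Example value from the W3C Trace Context specification.
	in, err := Parse("00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01")
	if err != nil {
		panic(err)
	}
	out := in.Child()
	fmt.Println("traceparent:", out) // same trace ID, new parent ID
}
```

Because every hop keeps the trace ID, a collector can group all spans from one request, and cost can be rolled up along the parent links.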

Security & Guardrails

API key authentication, filesystem and network permission sandboxing. Cost guardrails with per-agent budgets. Rate limiting and circuit breakers.

Skills System

Self-contained knowledge packages with trigger-based discovery. Skills bundle instructions, tools, and dependencies with SemVer compatibility. Supports remote MCP/HTTP sources and inter-skill dependencies.

Multi-Agent Collaboration

Capability cards expose typed intents, JSON-Schema I/O, and ACL matchers. Peers discover each other via discover_agents/route_intent and invoke call_agent with W3C trace propagation, idempotency, per-target/per-caller token-bucket quotas, and end-to-end cost roll-up. Declarative teams compose planner-worker and pipeline protocols (contract-net and swarm are available behind feature flags); subagents and 3-hop handoff remain available for in-session coordination.

Integrations & Connectors

Config-driven outbound integrations. Pluggable secret backends (env, file, Vault, AWS-SM, GCP-SM), hot-reload, boot-time scope enforcement, and per-principal impersonation. Built-ins: GitHub, Postgres, generic REST. Codegen from OpenAPI.

Native DB Tools

First-class database access without LLM overhead. Schema-validated CRUD, raw parameterized SQL, and audit. Per-table access policies with grammar-validated row-filter expressions (32 SQL injection vectors rejected). SQLite + Postgres dialects.

Memory & Context

Per-agent persistent memory with four typed scopes (user, feedback, project, reference) and MEMORY.md indexing. Automatic stale-output pruning, LRU tmp file eviction, and pre-compaction LLM extraction so long sessions stay coherent.
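LRU eviction of temporary files can be tracked with a doubly linked list plus a map, giving O(1) touch and evict. A minimal sketch; `LRU`, `Touch`, and the `onEvict` callback (which would delete the file in a real deployment) are hypothetical.

```go
package main

import (
	"container/list"
	"fmt"
)

// LRU is a hypothetical tracker that keeps at most `capacity` paths and
// evicts the least recently used one when a new path pushes it over.
type LRU struct {
	capacity int
	order    *list.List               // front = most recently used
	items    map[string]*list.Element // path -> its element in order
	onEvict  func(path string)        // e.g. delete the tmp file
}

func NewLRU(capacity int, onEvict func(string)) *LRU {
	return &LRU{capacity: capacity, order: list.New(), items: map[string]*list.Element{}, onEvict: onEvict}
}

// Touch records a use of path, inserting it if new and evicting the
// least recently used entry when over capacity.
func (l *LRU) Touch(path string) {
	if e, ok := l.items[path]; ok {
		l.order.MoveToFront(e)
		return
	}
	l.items[path] = l.order.PushFront(path)
	if l.order.Len() > l.capacity {
		oldest := l.order.Back()
		l.order.Remove(oldest)
		p := oldest.Value.(string)
		delete(l.items, p)
		l.onEvict(p)
	}
}

func main() {
	var evicted []string
	l := NewLRU(2, func(p string) { evicted = append(evicted, p) })
	l.Touch("/tmp/a")
	l.Touch("/tmp/b")
	l.Touch("/tmp/a")    // a becomes most recent
	l.Touch("/tmp/c")    // over capacity: b is least recent
	fmt.Println(evicted) // [/tmp/b]
}
```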

Channel Adapters

Slack (HMAC-SHA256, Block Kit) and Discord (Ed25519, deferred ack) out of the box. A ChannelRouter with a per-instance busy flag eliminates head-of-line blocking, and exponential-backoff retry comes with crash recovery and dead-letter inspection.

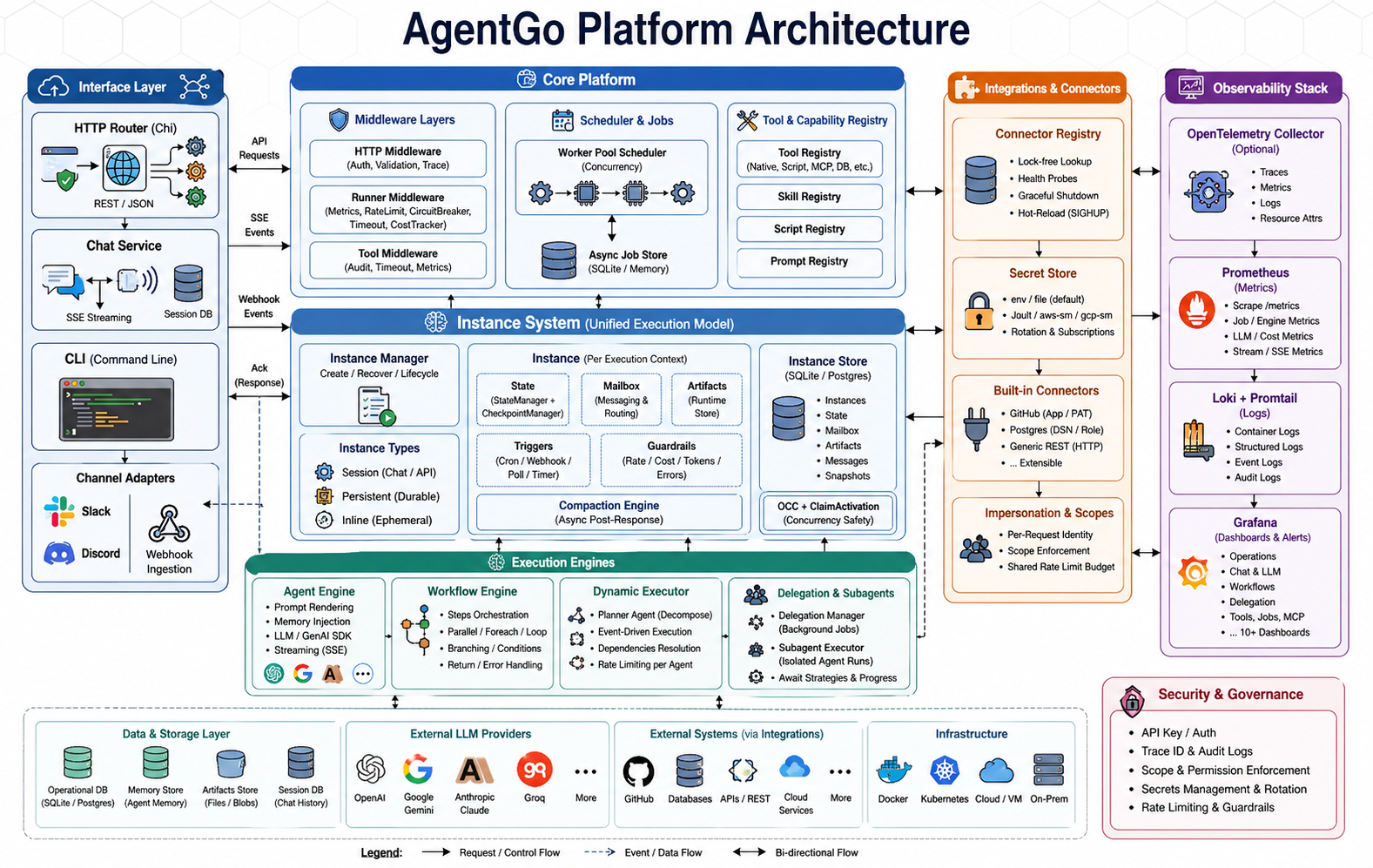

Architecture

A modular, layered architecture designed for extensibility and production reliability

    Request → Router → Handler → Scheduler → Agent → GenAI
                          ↓                    ↓
                   WorkflowEngine        ToolRegistry
                          ↓                    ↓
                     ChatService       DelegationManager
                          ↓                    ↓
                    SSE Streaming        CronScheduler
                                               ↓
                                    DurableManager → Instance(s) → Orchestrator

Define Agents in YAML

No boilerplate code. One file defines your agent's model, behavior, tools, and constraints.

    name: researcher
    description: Deep research agent with web access
    model: gemini-3-flash-preview
    provider: gemini
    prompt_template: research.tmpl
    system_prompt: |
      You are a thorough research assistant.
      Always cite sources and verify claims.
    tools:
      - web_search
      - read_file
      - write_file
    parameters:
      temperature: 0.3
      max_tokens: 8192
      max_iterations: 15
    permissions:
      filesystem:
        allowed_dirs: ["/data/research"]
      network:
        allowed_domains: ["*.google.com"]

    id: Jarvis
    name: Jarvis Agent
    version: "2.0.0"
    model: gemini-3.1-pro-preview
    tools: [save_memory, read_memory, delegate, subagent, ...]
    memory:
      enabled: true
      scopes: [global, self]
      inject_mode: index_only
    durable:
      enabled: true
      auto_start: true
      objective: |
        Persistent personal AI assistant — briefings, tasks, and inbox handling.
      activation_timeout: 10m
      cooldown: 5s
      max_activations_per_hour: 120
      max_tokens_per_activation: 50000
      triggers:
        - type: cron
          expr: "0 8 * * 1-5"
          input: '{"reason": "morning_briefing"}'
      state:
        initial:
          tasks: []
          notes: {}
        eviction:
          tasks: { max_items: 200 }
          notes: { max_items: 100 }
      mailbox:
        severity_routing:
          critical: ["outbox"]
          warning: ["outbox"]
    permissions:
      fs: ["read:configs/", "write:data/sessions/"]
      create: [skill, agent, workflow]
      approval_mode: review

    name: research-pipeline
    description: Multi-step research with review
    steps:
      - name: gather
        agent: researcher
        input: "Research: {{.query}}"
      - name: analyze
        agent: analyst
        input: "{{.steps.gather.output}}"
        depends_on: [gather]
      - name: review
        parallel:
          - agent: fact-checker
            input: "{{.steps.analyze.output}}"
          - agent: editor
            input: "{{.steps.analyze.output}}"
      - name: publish
        agent: writer
        input: "{{.steps.review.outputs}}"
        condition: "{{.steps.review.all_passed}}"
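The `depends_on` and `parallel` keys in the workflow definition describe a dependency graph. A minimal sketch of resolving such a graph into execution waves with Kahn's algorithm, assuming every step lists its prerequisites explicitly (the two parallel review branches are modeled as separate steps); `Step` and `Order` are hypothetical names, not the workflow engine's API.

```go
package main

import (
	"fmt"
	"sort"
)

// Step is a hypothetical shape mirroring a workflow step's name and depends_on.
type Step struct {
	Name      string
	DependsOn []string
}

// Order groups steps into waves (Kahn's algorithm): each step's dependencies
// all sit in earlier waves, so steps within one wave may run in parallel.
func Order(steps []Step) ([][]string, error) {
	indeg := make(map[string]int, len(steps))
	for _, s := range steps {
		indeg[s.Name] = len(s.DependsOn)
	}
	next := map[string][]string{} // step -> steps that depend on it
	for _, s := range steps {
		for _, d := range s.DependsOn {
			if _, ok := indeg[d]; !ok {
				return nil, fmt.Errorf("step %q depends on unknown step %q", s.Name, d)
			}
			next[d] = append(next[d], s.Name)
		}
	}
	var wave []string
	for name, n := range indeg {
		if n == 0 {
			wave = append(wave, name)
		}
	}
	var waves [][]string
	seen := 0
	for len(wave) > 0 {
		sort.Strings(wave) // deterministic output
		waves = append(waves, wave)
		seen += len(wave)
		var following []string
		for _, name := range wave {
			for _, m := range next[name] {
				indeg[m]--
				if indeg[m] == 0 {
					following = append(following, m)
				}
			}
		}
		wave = following
	}
	if seen != len(indeg) {
		return nil, fmt.Errorf("dependency cycle among workflow steps")
	}
	return waves, nil
}

func main() {
	waves, err := Order([]Step{
		{Name: "gather"},
		{Name: "analyze", DependsOn: []string{"gather"}},
		{Name: "fact-check", DependsOn: []string{"analyze"}},
		{Name: "edit", DependsOn: []string{"analyze"}},
		{Name: "publish", DependsOn: []string{"fact-check", "edit"}},
	})
	if err != nil {
		panic(err)
	}
	fmt.Println(waves) // [[gather] [analyze] [edit fact-check] [publish]]
}
```

Steps in the same wave have no dependencies on each other, so an engine could dispatch them concurrently; an unknown dependency or a cycle is reported as an error instead of hanging.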

Declarative Configuration

Every agent aspect is defined in YAML: model selection, prompt templates, tool bindings, iteration limits, and safety permissions. No Go code required to create new agents.

Hot Reload

Edit YAML, send SIGHUP. Agent configs reload without restarting the server. Zero-downtime updates to prompts, tools, and parameters.

Pack System

Bundle agents with their prompts, tools, and skills into distributable packs. Import community packs or create your own via manifest.yaml.

Built-in Guardrails

Filesystem sandboxing, network restrictions, cost budgets, and iteration limits are first-class config. Security is declarative, not an afterthought.

AI-Powered Configuration

Describe what you need in plain English. The Config Manager agent builds production-ready configs through conversation.

    $ agentgo chat start config-manager
    Session started · config-manager · gemini-3.1-pro-preview

    You: Create a sentiment analysis agent that monitors
         Twitter mentions and alerts on negative trends

    Config Manager: I'll create a complete stack for that:
      1. Agent sentiment-monitor with twitter-search,
         sentiment-analyze, and slack-notify tools
      2. Workflow sentiment-pipeline with
         fetch → analyze → alert steps
      3. Cron trigger every 15 minutes
      4. Cost budget $5/day
      Shall I proceed? [approve] [edit] [cancel]

    You: approve

    ✓ Created agent sentiment-monitor
    ✓ Created workflow sentiment-pipeline
    ✓ Validated all configs
    ✓ Hot-reloaded — agent is live

Conversational Setup

Describe your business needs in plain English. The Config Manager translates requirements into valid YAML — agents, workflows, tools, and prompts.

Safe Deployment

Automatic validation, backup, and hot-reload with health checks. Approval gates for sensitive operations ensure nothing goes live without your sign-off.

Full Stack Generation

Creates agents, workflows, tools, and prompts in one session. Pack-aware bundling groups related configs for portable deployment.

Audit Trail

Every change is validated, backed up, and logged. Rollback information is preserved so you can undo any configuration change.

LLM Providers

First-class support for major LLM providers with a unified interface

Token Streaming

Real-time SSE streaming across all providers. Consistent callback interface regardless of backend.

Function Calling

Unified tool/function schema. Provider-specific adapters handle format differences transparently.

Provider Failover

RetryLLMCall retries failed calls with exponential backoff. Providers can be switched per agent without code changes.

Performance & Deployment

Compiled for speed, packaged for simplicity

Docker

Minimal Alpine-based image. Single container, production-ready.

Compose Stack

Modular: AgentGo + Postgres + Prometheus + Grafana + Loki. Each layer can be brought up or down independently.

Single Binary

Download and run. Embedded configs and resources. No install step.

Hot Reload

SIGHUP reloads agent and workflow configs. Zero-downtime updates.

Get Started

From zero to running agents in three steps

Clone & Build

    # Request access first
    git clone <repo-url>
    cd <repo-dir>
    make build

Configure

    export GEMINI_API_KEY=your-key
    # Edit configs/agents/*.yaml

Run

    ./agentgo serve
    # Or: make docker-run
